0.22.1 Release Notes

After a significant hiatus, development of Tic-tac-toe Collection has resumed.

The immediate future will consist of maintenance releases designed to bring my tooling and the libraries I use back up to date.

There are some cool game changes in development, but they aren’t in this version. This version does, however, contain significant changes to ads.

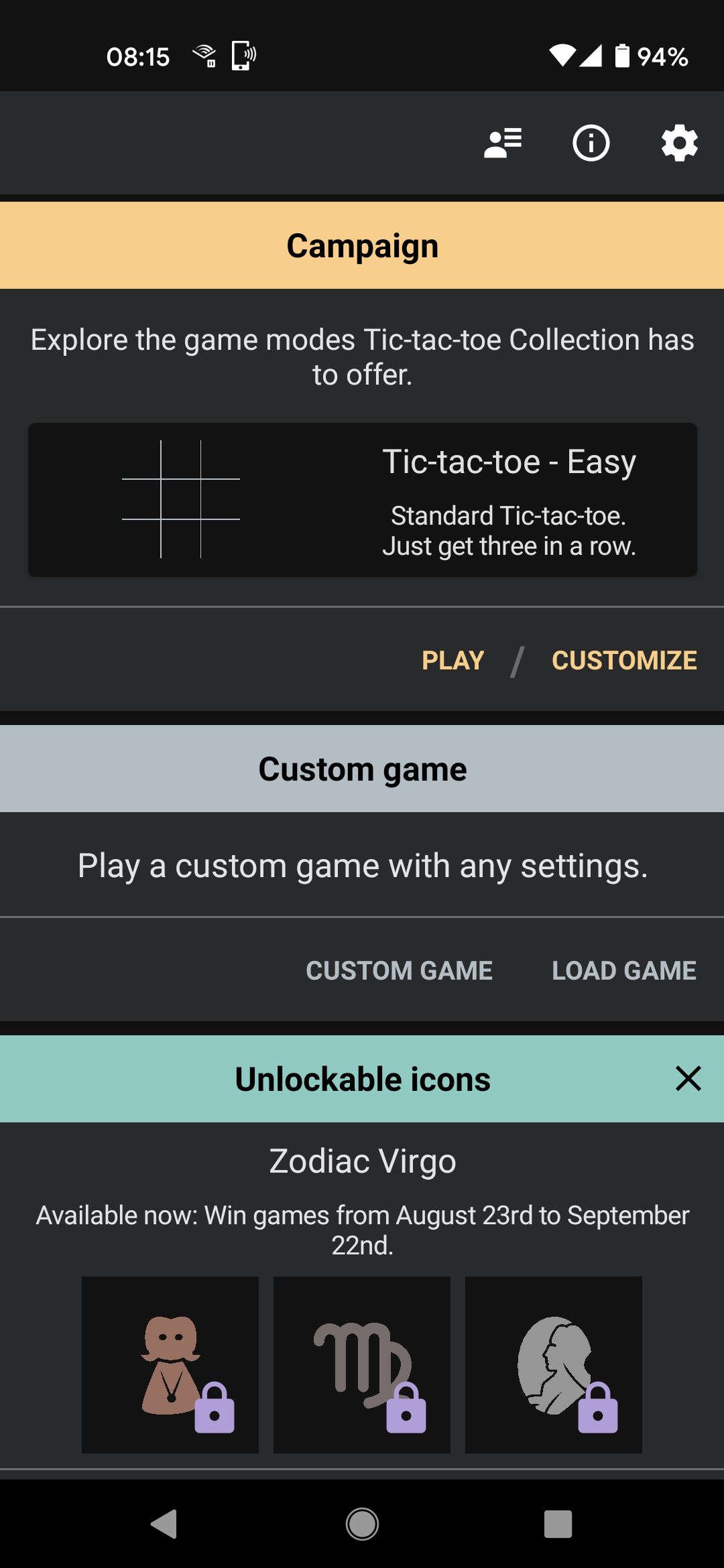

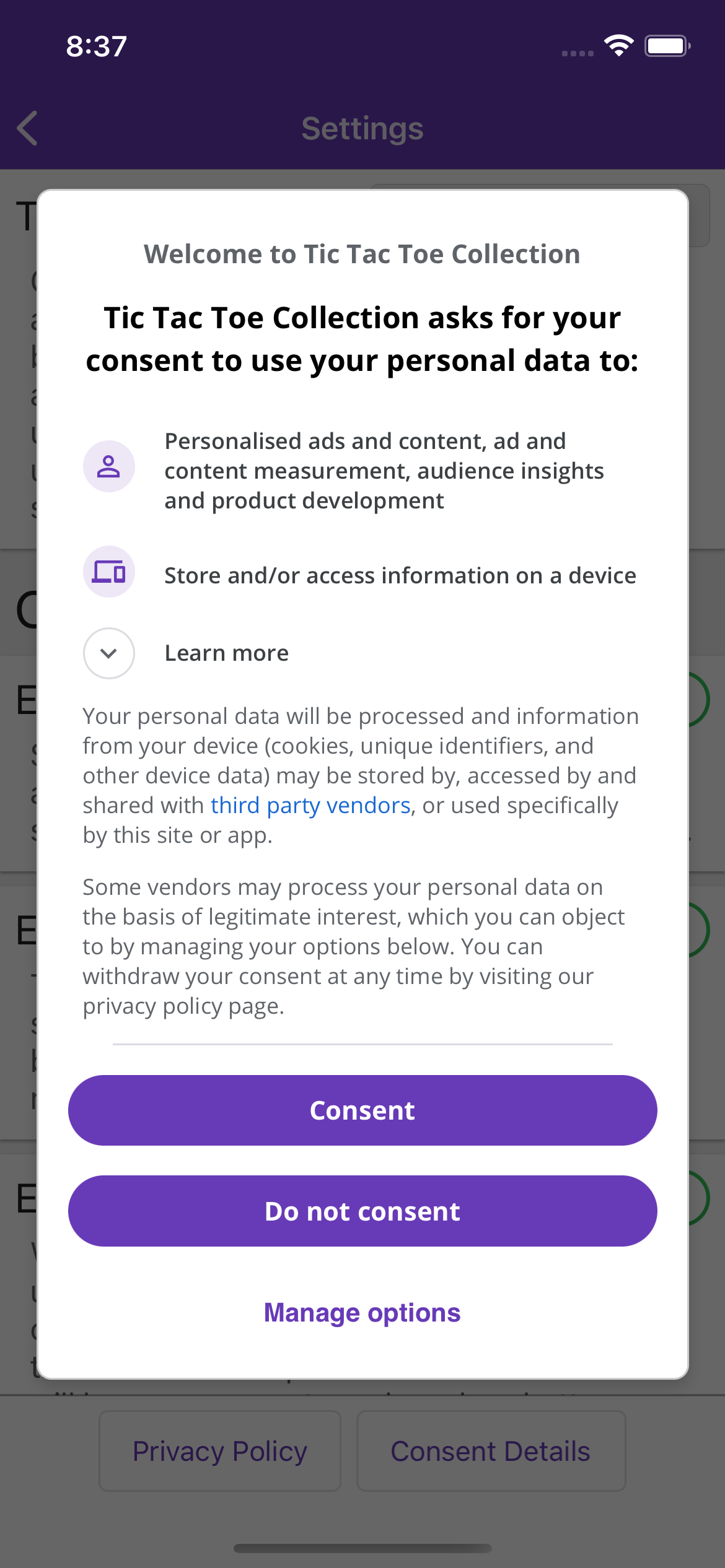

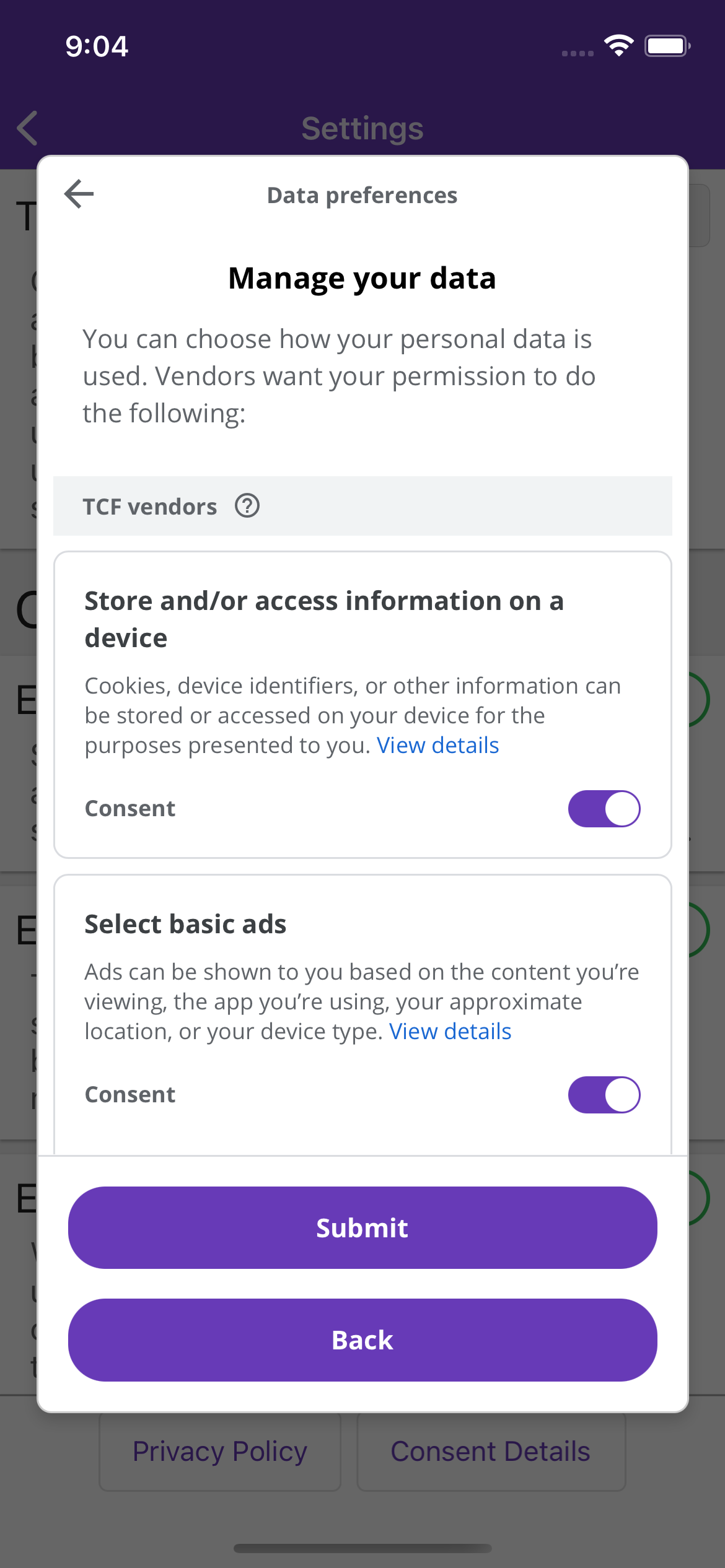

- Added Google User Messaging Platform consent flow for ads.

- Removed non-rewarded interstitial ads.

- Fixed campaign not saving after loading a complete game.

To coincide with the changes to ads and consent, the app privacy policy has been updated.

Actual change to policy

Analytics is no longer opt-out. For users subject to the GDPR, the policy now states explicitly that data collected this way is processed on the basis of legitimate interest rather than consent.

Other changes

There is a summary section that is intended to be as concise and human readable as possible.

Website changes

I have added a privacy policy specifically for the website. I have also removed Google Analytics, and since the only remaining cookies are strictly necessary (as defined in the EU ePrivacy Directive), I have removed the cookie consent banner.

There are big changes in 0.22 to how ads and consent are managed, at least for users subject to the GDPR.

First, a simple change: interstitial video ads have been removed. These could previously appear at the end of each game, but they no longer will.

The bigger change is the introduction of Google’s User Messaging Platform to manage consent. This combines the previously separate dialogs for analytics consent and ad consent into a single flow.

There are advantages and disadvantages to this, which I’ve expanded on in a post on my personal blog.
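To make the idea of a single flow more concrete, here is a minimal Python model of it. It borrows the four consent status names used by Google’s UMP SDK (`UNKNOWN`, `NOT_REQUIRED`, `REQUIRED`, `OBTAINED`), but it is not the SDK’s API and not the app’s actual code: `run_consent_flow` and the `show_form` callback are hypothetical stand-ins for checking the consent status and displaying the consent form. It also assumes, following the updated privacy policy, that analytics relies on legitimate interest and so does not wait for consent.

```python
from enum import Enum


class ConsentStatus(Enum):
    # Names mirror the UMP SDK's consent statuses; the numeric values are illustrative.
    UNKNOWN = 0
    NOT_REQUIRED = 1
    REQUIRED = 2
    OBTAINED = 3


def run_consent_flow(status, show_form):
    """Return (analytics_enabled, can_request_ads) after one combined consent flow.

    `show_form` is a hypothetical callback standing in for the consent form;
    it returns True once the user has made a choice.
    """
    # Assumption: analytics is based on legitimate interest, so it never waits on consent.
    analytics_enabled = True

    if status == ConsentStatus.REQUIRED:
        # A single form replaces the old separate analytics and ads dialogs.
        if show_form():
            status = ConsentStatus.OBTAINED

    # Ads are only requested once consent is either unnecessary or has been gathered.
    can_request_ads = status in (ConsentStatus.NOT_REQUIRED, ConsentStatus.OBTAINED)
    return analytics_enabled, can_request_ads
```

In this sketch a user outside the GDPR’s scope (`NOT_REQUIRED`) never sees a form, while a user who dismisses the form without choosing leaves ads disabled until the flow is completed.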

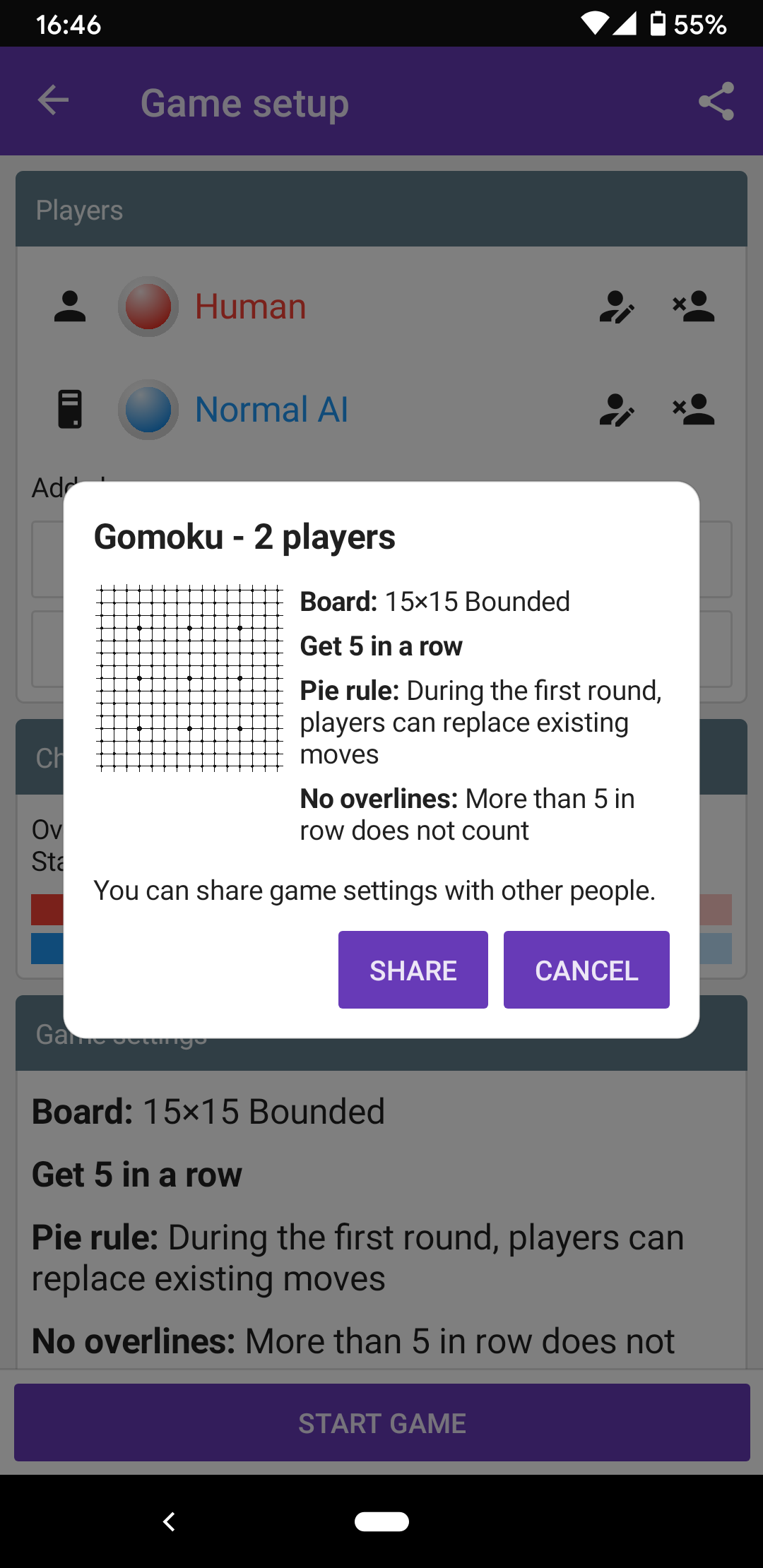

For the last few versions I’ve been implementing the ability to share game modes. Actually triggering a share was made user-facing in 0.20, but the game could load shared modes (with varying degrees of success) before then.

It was a multi-step process because sharing more or less requires a website to exist. For example, if you visit this page, it includes a link to “try opening in the app”. If you click the link on desktop, it will take you back to the same place, since that page is the fallback for the sharing link.
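A fallback page like this works when the whole game mode can travel inside the link itself. The sketch below shows one way to do that, assuming a hypothetical `https://example.com/share` address and hypothetical setting names; it is not the app’s actual link format. The settings are encoded as query parameters, so the app (when it handles the link) and the fallback web page can both decode the same URL.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical base URL: the app handles it when installed, otherwise the browser
# lands on a web page at this address that can render the same game mode.
SHARE_BASE = "https://example.com/share"


def make_share_link(settings):
    """Encode a game mode's settings (string keys and values) as query parameters."""
    # Sorting keys means identical settings always produce an identical link.
    return SHARE_BASE + "?" + urlencode(sorted(settings.items()))


def parse_share_link(url):
    """Recover the settings from a share link, in the app or on the fallback page."""
    query = parse_qs(urlsplit(url).query)
    # parse_qs returns a list of values per key; each setting appears once here.
    return {key: values[0] for key, values in query.items()}
```

For example, `make_share_link({"size": "4", "line": "3"})` produces `https://example.com/share?line=3&size=4`, and `parse_share_link` turns that URL back into the original dictionary.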

An additional feature I developed at the same time as sharing is the ability to embed little game mode widgets in the site.

Another thing I’ve been doing that uses the sharing link is creating YouTube videos of interesting game modes featuring the AI.

- Added new options to modify game to allow a variable number of human players.

- Prevented the AI from playing while popups are displayed.

- Fixed games with no human players not showing results.

- Fixed AI not understanding misère.

- Updated UI icons.

- Added settings sharing.

- Changing to a non-bounded topology now automatically enables “allow cell reuse”.

- Added some network information to local network screen.
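Misère is the variant in which completing a line loses rather than wins, so an AI that scores positions as in the normal game will chase exactly the lines it should avoid. The sketch below illustrates the sign flip on a plain 3x3 board; the app supports many more board shapes, and `terminal_score` is a hypothetical helper, not the app’s AI code.

```python
# Every line on a 3x3 board, as index triples into a flat list of 9 cells.
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),  # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),  # columns
    (0, 4, 8), (2, 4, 6),             # diagonals
]


def line_owner(board):
    """Return the player who has completed a line, or None if nobody has."""
    for a, b, c in LINES:
        if board[a] is not None and board[a] == board[b] == board[c]:
            return board[a]
    return None


def terminal_score(board, player, misere):
    """Score a finished position from `player`'s point of view.

    Normal rules: completing a line is +1 for its owner and -1 for the opponent.
    Misère rules: completing a line is a loss, so the sign flips.
    """
    owner = line_owner(board)
    if owner is None:
        return 0  # no completed line: treated as a draw
    score = 1 if owner == player else -1
    return -score if misere else score
```

A minimax search built on this function needs no other change for misère: flipping the terminal score is enough for it to steer away from completing lines.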

I have tried to be aggressive about keeping the size of the app down as it has grown. I made a specific decision early on to avoid image assets and I went through quite some hassle to enable build features to keep the code small.

A while ago Google introduced a new way to package and distribute Android apps called Android App Bundle.

Previously when distributing an Android app, the developer would produce an Android Application Package (often called an APK, based on its standard file extension) that included everything needed to run on a variety of Android devices. This could include things like assets at different resolutions and code for different device architectures that were not all needed on any single device.

It was possible to produce different, more specialized APKs, but setting up a build pipeline to produce and distribute them took quite a bit more effort. With App Bundles, Google Play does this for you. An App Bundle contains the same wide array of content for different devices, but Google Play generates a device-specific APK for each device that downloads it.
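The saving can be illustrated with a simplified model. All sizes below are hypothetical, not measurements from this app, and the model treats Google Play’s output as a single APK per device (in practice Play can deliver a base APK plus configuration splits). A universal APK carries every CPU architecture (ABI) and every screen density, while a device-specific APK carries only the ones that device uses; the spread across devices also shows why there is a smallest and a largest possible size.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical sizes in MB, chosen only to illustrate the idea (not measured from the app).
BASE_MB = 5.0  # code and resources that every device needs
NATIVE_LIBS_MB = {"armeabi-v7a": 3.0, "arm64-v8a": 3.5, "x86_64": 4.0}
DENSITY_ASSETS_MB = {"mdpi": 0.2, "xhdpi": 0.4, "xxhdpi": 0.6}


@dataclass(frozen=True)
class Device:
    abi: str      # CPU architecture, e.g. "arm64-v8a"
    density: str  # screen density bucket, e.g. "xxhdpi"


def universal_apk_mb():
    """A single APK for every device must include every ABI and every density."""
    return BASE_MB + sum(NATIVE_LIBS_MB.values()) + sum(DENSITY_ASSETS_MB.values())


def device_apk_mb(device):
    """A device-specific APK includes only the ABI and density this device uses."""
    return BASE_MB + NATIVE_LIBS_MB[device.abi] + DENSITY_ASSETS_MB[device.density]


def apk_size_range():
    """Smallest and largest device-specific APK over every supported configuration."""
    sizes = [
        device_apk_mb(Device(abi, density))
        for abi, density in product(NATIVE_LIBS_MB, DENSITY_ASSETS_MB)
    ]
    return min(sizes), max(sizes)
```

With these made-up numbers every device-specific APK is well under the universal one, and the gap grows with each additional ABI or density the app supports.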

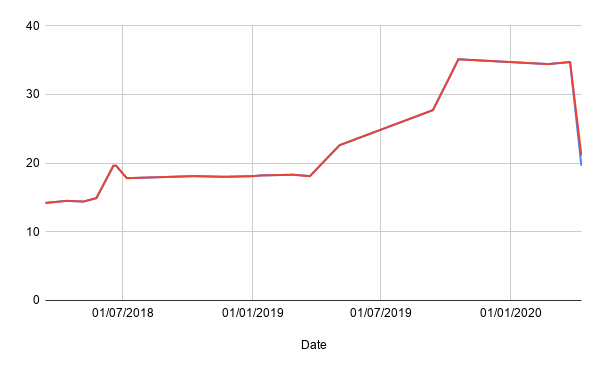

The result is quite staggering, much more than I expected. The graph here shows the size of the app in MB over time, with the conspicuous drop at the end when I switched to using App Bundles. (One interesting thing to note is that the line splits into two at the end. The size now varies slightly by device, and the two lines represent the smallest and largest APKs possible.)

The Android version is now distributed using an App Bundle on Google Play.